Today’s post on Big Data is authored by Anthony Watson, CIO of Europe, Middle East Retail & Business Banking at Barclays Bank. It is a thought-provoking take on ‘Big Data’ and how best to use it effectively. Please look past the atrocious British spelling :). We look forward to your comments and perspective. Best, Jim Ditmore

In March 2013, I read with great interest the results of the University of Cambridge analysis of some 58,000 Facebook profiles. The results predicted unpublished information like gender, sexual orientation, religious and political leanings of the profile owners. In one of the biggest studies of its kind, scientists from the university’s psychometrics team developed algorithms that were 88% accurate in predicting male sexual orientation, 95% for race and 80% for religion and political leanings. Personality types and emotional stability were also predicted with accuracy ranging from 62-75%. The experiment was conducted over the course of several years through their MyPersonality website and Facebook Application. You can sample a limited version of the method for yourself at http://www.YouAreWhatYouLike.com.

Not surprisingly, Facebook declined to comment on the analysis, but I guarantee you none of this information is news to anyone at Facebook. In fact it’s just the tip of the iceberg. Without a doubt the good people of Facebook have far more complex algorithms trawling, interrogating and manipulating its vast and disparate data warehouses, striving to give its demanding user base ever richer, more unique and distinctly customised experiences.

As an IT leader, I’d have to be living under a rock to have missed the “Big Data” buzz. Vendors, analysts, well-intentioned executives and even my own staff – everyone seems to have an opinion lately, and most of those opinions imply that I should spend more money on Big Data.

It’s been clear to me for some time that we are no longer in the age of “what’s possible” when it comes to Big Data. Big Data is “big business” and the companies that can unlock, manipulate and utilise data and information to create compelling products and services for their consumers are going to win big in their respective industries.

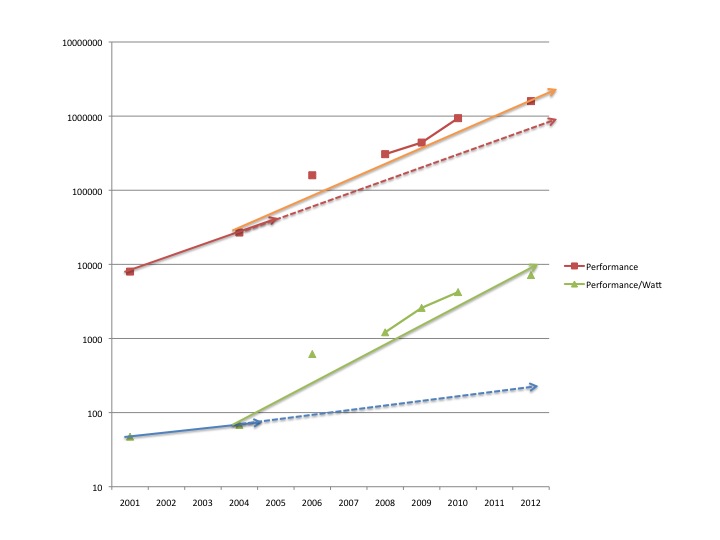

Data flow around the world and through organisations is increasing exponentially and becoming highly complex; we’re dealing with greater and greater demands for storing, transmitting, and processing it. But in my opinion, all that is secondary. What’s exciting is what’s being done with it to enable better customer service and bespoke consumer interactions that significantly increase value along all our service lines in a way that was simply not possible just a few years ago. This is what’s truly compelling. Big Data is just a means to an end, and I question whether we’re losing sight of that in the midst of all the hype.

Why do we want bigger or better data? What is our goal? What does success look like? How will we know if we have attained it? These are the important questions and I sometimes get concerned that – like so often before in IT – we’re rushing (or being pushed by vendors, both consultants and solution providers alike) to solutions, tools and products before we really understand the broader value proposition. Let’s not be a solution in search of a problem. We’ve been down that supply-centric road too many times before.

For me it’s simple: innovation starts with demand. Demand is the force that drives innovation. However, this should not be confused with the axiom “necessity is the mother of invention”. When it comes to technology we live in a world where invention and innovation are defining the necessity and the demand. It all starts with a value experience for our customers. Only through a deep understanding of what “value” means to the customer can we truly be effective in searching out solutions. This understanding requires an open mind and the innovative resolve to challenge the conventions of “how we’ve always done it.”

Candidly, I hate the term “Big Data”. It is marketing verbiage, coined by Gartner, that covers a broad ecosystem of problems, tools, techniques, products, and solutions. If someone suggests you have a Big Data problem, that doesn’t say much, as arguably any company operating at scale, in any industry, will have some sort of challenge with data. But beyond tagging all these challenges with the term Big Data, you’ll find little in common across diverse industries, products or services.

Given this diversity across industry and within organisations, how do we construct anything resembling a Big Data strategy? We have to stop thinking about the “supply” of Big Data tools, techniques, and products peddled by armies of over-eager consultants and solution providers. For me, technology simply enables a business proposition. We need to look upstream, to the demand. Demand presents itself in business terms. For example, in Financial Services you might look at:

- Who are our most profitable customers and, most importantly, why?

- How do we increase customer satisfaction and drive brand loyalty?

- How do we take excess and overbearing processes out of our supply chain and speed up time to market/service?

- How do we reduce our losses to fraud without increasing compliance & control costs?

Importantly, asking these questions may or may not lead us down a Big Data road. But we have to start there. And the next set of questions is not about the solutions but framing the demand and potential solutions:

- How do we understand the problem today? How is it measured? What would improvement look like?

- What works in our current approach, in terms of the business results? What doesn’t? Why? What needs to improve?

- Finally, what are the technical limitations in our current platforms? Have new techniques and tools emerged that directly address our current shortcomings?

- Can we develop a hypothesis, an experimental approach to test these new techniques, so that they truly can deliver an improvement?

- Having conducted the experiment, what did we learn? What should we abandon, and what should we move forward with?

There’s a system to this. Once we go through the above process, we start the cycle over. In a nutshell, it’s the process of continuous improvement. Some of you will recognise the well-known cycle of Plan, Do, Check, Act (“PDCA”) in the above.

Continuous improvement and PDCA are interesting in that they are essentially the scientific method applied to business. Fittingly, one of the notable components of the Big Data movement is the emerging role of the Data Scientist.

So, who can help you assess this? Who is qualified to walk you through the process of defining your business problems and solving them through innovative analytics? I think it is the Data Scientist.

What’s a Data Scientist? It’s not a well-defined position, but an ideal candidate would offer:

- Hands-on experience with building and using large and complex databases, relational and non-relational, and in the fields of data architecture and information management more broadly.

- Solid applied statistical training, grounded in a broader context of mathematical modeling.

- Exposure to continuous improvement disciplines and industrial theory.

- Most importantly: functional understanding of whatever industry is paying their salary, i.e., real-world operational experience – theory is valuable; “scar tissue” is essential.

This person should be able to model data, translate that model into a physical schema, load that schema from sources, and write queries against it, but that’s just the start. One semester of introductory stats isn’t enough. They need to know what tools to use and when, and the limits and trade-offs of those tools. They need to be rigorous in their understanding and communication of confidence levels in their models and findings, and cautious of the inferences they draw.

Some of the Data Scientist’s core skills are transferrable, especially at the entry level. But at higher levels, they need to specialise. Vertical industry problems are rich, challenging, and deep. For example, an expert in call centre analytics would most certainly struggle to develop comparable skills in supply chain optimisation or workforce management.

And ultimately, they need to be experimentalists – true scientists with an insatiable sense of curiosity, on a quest for knowledge on behalf of their company or organisation, engaged in a continuous cycle of:

- examining the current reality,

- developing and testing hypotheses, and

- delivering positive results for broad implementation so that the cycle can begin again.

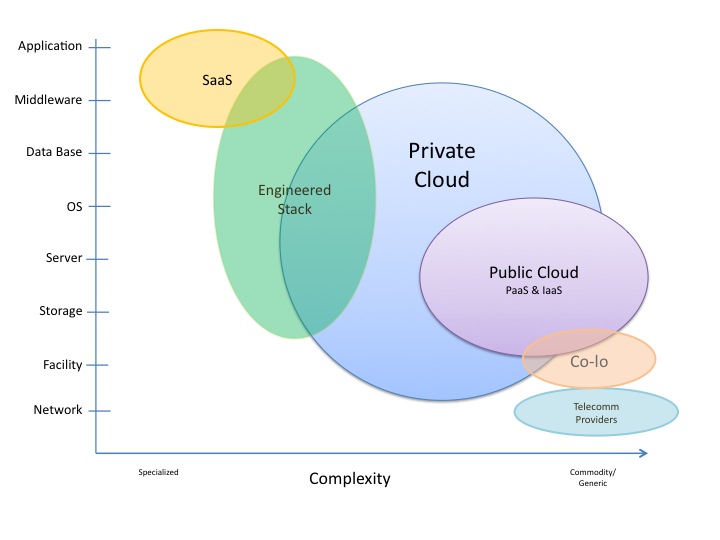

There are many sectors we can apply Big Data techniques to: financial services, manufacturing, retail, energy, and so forth. There are also common functional domains across the sectors: human resources, customer service, corporate finance, and even IT itself.

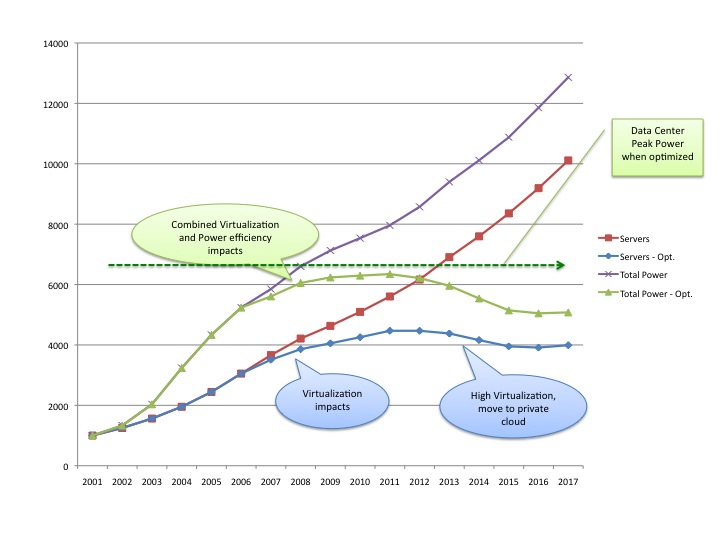

IT is particularly interesting. It’s the largest consumer of capital in most enterprises. IT represents a set of complex concerns that are not well understood in many enterprises: projects, vendors, assets, skilled staff, and intricate computing environments. All these come together to (hopefully) deliver critical and continuous value in the form of agile, stable and available IT services for internal business stakeholders, and most importantly external customers.

Given the criticality of IT, it’s often surprising how poorly managed IT is in terms of data and measurement. Does IT represent a Big Data domain? Yes, absolutely. From the variety of IT deliverables and artefacts and inventories, to the velocity of IT events feeding management consoles, to the volume of archived IT logs, IT itself is challenged by Big Data. IT is a microcosm of many business models. We in IT don’t do ourselves any favours starting from a supply perspective here, either. IT’s legitimate business questions include:

- Are we getting the IT we’re paying for? Do we have unintentional redundancy in what we’re buying? Are we paying for services not delivered?

- Why did that high severity incident occur and can we begin to predict incidents?

- How agile are our systems? How stable? How available?

- Is there a trade-off between agility, stability and/or availability? How can we increase all three?

With the money spent on IT, and its operational criticality, Data Scientists can deliver value here as well. The method is the same: understand the current situation, develop and test new ideas, implement the ones that work, and watch results over time as input into the next round.

For example, the IT organisation might be challenged by a business problem of poor stakeholder trust, due to real or perceived inaccuracies in IT cost recovery. In turn, it is then determined that these inaccuracies stem from poor data quality for the IT assets on which cost recovery is based.

Data Scientists can explain that without an understanding of data quality, one does not know what confidence a model merits. If quality cannot be improved, the model remains more uncertain. But often, the quality can be improved. Asking “why” – perhaps repeatedly – may uncover key information that assists in turn with developing working and testable hypotheses for how to improve. Perhaps adopting master data management techniques pioneered for customer and product data will assist. Perhaps measuring the IT asset data quality trends over time is essential to improvement – people tend to focus on what is being measured and called out in a consistent way. Ultimately, this line of inquiry might result in the acquisition of a toolset like Blazent, which provides IT analytics & data quality solutions enabling a true end-to-end view of the IT ecosystem. Blazent is a toolset we’ve deployed at Barclays to great effect.
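To make the idea of measuring IT asset data quality over time a little more concrete, here is a minimal Python sketch. It is purely illustrative: the field names (`owner`, `cost_centre`, `location`), the monthly snapshot labels and the sample records are all assumptions invented for the example, and it says nothing about how Blazent or any other product works. It scores each snapshot by completeness only, which is one of several possible quality measures.

```python
# Hypothetical fields an asset record needs before it can support cost recovery.
REQUIRED_FIELDS = ("owner", "cost_centre", "location")


def completeness(records):
    """Return the fraction of records with every required field populated."""
    if not records:
        return 0.0
    complete = sum(
        1
        for record in records
        if all(str(record.get(field) or "").strip() for field in REQUIRED_FIELDS)
    )
    return complete / len(records)


def quality_trend(snapshots):
    """Map each snapshot label to its completeness score, ordered by label."""
    return {
        label: round(completeness(records), 2)
        for label, records in sorted(snapshots.items())
    }


# Invented sample data: two monthly extracts of an asset inventory.
snapshots = {
    "2013-01": [
        {"owner": "ops", "cost_centre": "CC1", "location": "LDN"},
        {"owner": "", "cost_centre": "CC2", "location": "LDN"},
        {"owner": "dev", "cost_centre": None, "location": "NYC"},
        {"owner": "dev", "cost_centre": "CC3", "location": ""},
    ],
    "2013-02": [
        {"owner": "ops", "cost_centre": "CC1", "location": "LDN"},
        {"owner": "ops", "cost_centre": "CC2", "location": "LDN"},
        {"owner": "dev", "cost_centre": "CC4", "location": "NYC"},
        {"owner": "dev", "cost_centre": "CC3", "location": ""},
    ],
}

trend = quality_trend(snapshots)
print(trend)
```

Publishing a score like this consistently, month after month, is the point: the number itself matters less than the fact that it is visible and trending.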

Similarly, a Data Scientist schooled in data management techniques, and with an experimental, continuous improvement orientation might look at an organisation’s recurring problems in diagnosing and fixing major incidents, and recommend that analytics be deployed against the terabytes of logs accumulating every day, both to improve root cause analysis, and ultimately to proactively predict outage scenarios based on previous outage patterns. Vendors like Splunk and Prelert might be brought in to assist with this problem at the systems management level. SAS has worked with text analytics across incident reports in safety-critical industries to identify recurring patterns of issues.
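As a rough illustration of what looking for previous outage patterns in logs could mean at its simplest, the Python sketch below counts which log signatures appeared shortly before past outages. Everything in it is an assumption made for the example: the signature names, the 30-minute window, and the sample events and outage times. It is not how Splunk, Prelert or SAS approach the problem, and a signature that often precedes outages is only a hypothesis to test, not a prediction.

```python
from collections import Counter
from datetime import datetime, timedelta


def signatures_before_outages(events, outages, window=timedelta(minutes=30)):
    """Count, per log signature, how many outages it preceded within the window."""
    counts = Counter()
    for outage in outages:
        # Use a set so a noisy signature is counted once per outage.
        seen = {
            signature
            for timestamp, signature in events
            if outage - window <= timestamp < outage
        }
        counts.update(seen)
    return counts


# Invented sample data: (timestamp, signature) pairs parsed from logs.
events = [
    (datetime(2013, 5, 1, 9, 40), "disk_latency_high"),
    (datetime(2013, 5, 1, 9, 50), "conn_pool_exhausted"),
    (datetime(2013, 5, 3, 14, 45), "disk_latency_high"),
    (datetime(2013, 5, 4, 8, 0), "cert_expiry_warning"),
]
outages = [datetime(2013, 5, 1, 10, 0), datetime(2013, 5, 3, 15, 0)]

precursors = signatures_before_outages(events, outages)
print(precursors.most_common())
```

A sensible next step in the experimental cycle would be to compare these counts against how often each signature appears when no outage follows, so that merely common messages are not mistaken for warning signs.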

It all starts with business benefit and value. The Big Data journey must begin with the end in mind, and not rush to purchase vehicles before the terrain and destination are known. A Data Scientist, or at least someone operating with a continuous improvement mindset who will champion this cause, is an essential component. So, rather than just talking about “Big Data,” let’s talk about “demand-driven data science.” If we take that as our rallying cry and driving vision, we’ll go much further in delivering compelling, demonstrable and sustainable value in the end.

Best, Anthony Watson